
Overview

I often struggle to explain the value of ontologies to my clients. The word ‘ontology’ itself sounds complicated, academic, pompous, bombastic, and irrelevant. For that matter, the word ‘bombastic’ always struck me as, well . . . bombastic.

Worse still, when you look up ‘ontology’ in everyone’s favorite dictionary, er, Google, you get definitions that are even less helpful:

noun

1. the branch of metaphysics dealing with the nature of being.
2. a set of concepts and categories in a subject area or domain that shows their properties and the relations between them.

Even if someone understood approximately what an ontology was after reading these definitions, they would probably still scratch their heads wondering why any of this really matters. So, you see, I need a way to convey a more visceral feeling for the concept.

Let’s start with my own intuitive definition: An ontology is essentially a frame of reference that supports reasoning. It is the set of rules and facts that describe the way a system works and, in turn, supports our ability to predict how the system (and all its individual components) would likely behave in response to various internal/external stimuli.

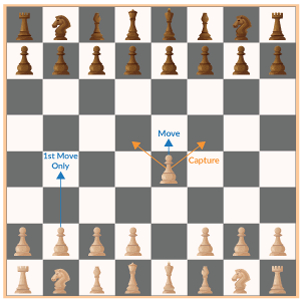

For illustration, if we’re talking about a game of chess, then the ontological model of the game expresses the game’s rules and facts. In chess, a pawn moves one square forward under most circumstances, except on its first move, when it may move two squares forward, and except when it has the opportunity to eliminate (“kill”) an opposing piece diagonally in front of it. If we observed enough chess games, even without knowing anything about the rules of chess, we could readily infer how pawns move and capture.

Likewise, we could readily work out how each of the other pieces moves if we watched a sufficient number of games. Knowing how the pieces move is a basic foundation for things like formulating strategies or predicting the outcomes of chess games. We could attempt both without knowing any of the rules, but if we knew the rules, we would almost certainly do a better job of determining the most probable outcome of a given move, or even an entire game. So, an ontology is a way to explicitly capture and use domain knowledge.
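For readers who think in code, here is a minimal Python sketch of the pawn rules described above, written as explicit rules and facts rather than left to be inferred from observed games. The board encoding and the names `Pawn`, `on_board`, and `pawn_moves` are hypothetical, chosen only for illustration. The sketch deliberately leaves out en passant, promotion, and check, so treat it as a simplified model rather than a complete chess engine.

```python
from dataclasses import dataclass

# Hypothetical encoding: a square is a (file, rank) pair, each 0-7.
# White pawns advance toward rank 7, black pawns toward rank 0.
# Simplified on purpose: no en passant, no promotion, no check detection.


@dataclass(frozen=True)
class Pawn:
    color: str               # "white" or "black"
    square: tuple            # (file, rank)
    has_moved: bool = False  # a fact the two-square rule depends on


def on_board(square):
    file, rank = square
    return 0 <= file <= 7 and 0 <= rank <= 7


def pawn_moves(pawn, occupied):
    """Apply the pawn rules to a position.

    `occupied` maps each occupied square to the color of the piece on it.
    Returns the destination squares the rules allow.
    """
    file, rank = pawn.square
    step = 1 if pawn.color == "white" else -1
    moves = []

    # Rule 1: one square forward, if that square is empty.
    one = (file, rank + step)
    if on_board(one) and one not in occupied:
        moves.append(one)
        # Rule 2: on its first move, two squares forward, if the path is clear.
        two = (file, rank + 2 * step)
        if not pawn.has_moved and on_board(two) and two not in occupied:
            moves.append(two)

    # Rule 3: capture an opposing piece diagonally in front of it.
    for side in (-1, 1):
        diagonal = (file + side, rank + step)
        if on_board(diagonal) and occupied.get(diagonal) not in (None, pawn.color):
            moves.append(diagonal)

    return moves


# A white pawn on its starting square with a black piece diagonally ahead.
print(pawn_moves(Pawn("white", (4, 1)), {(5, 2): "black"}))
# → [(4, 2), (4, 3), (5, 2)]
```

The point is not the code itself but the shift it represents: once the rules are written down, a program can reason with them directly (for example, it never proposes a backwards pawn move) instead of having to rediscover them from thousands of games.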

At an even more fundamental level, an ontology helps us nail down the definition (or nature) of each entity in a given system. In the chess example above, the nature of each piece is largely determined by its initial position on the board and/or its degrees of freedom of movement (forwards, backwards, sideways, diagonally, etc.). Although we use shapes to differentiate between pieces, the shape is really just a human convenience and is mostly redundant.

Things are not always so tidy. Sometimes a thing behaves one way in one context and in a completely different way in another. This confusion of circumstances gives rise to different interpretations of the nature of the thing itself. For instance, when two people look at the same data and come to completely opposite conclusions, it is usually because they hold different ontological models of what they are looking at. In such situations, the name of the game is to figure out which ontological model more accurately reflects the “true rules of the game.”

Recently, I happened to revisit the interrogation scene of “Lord of War” [Spoiler Alert!], and it struck me as a perfect illustration of why ontologies matter. The short clip is worth a look if you haven’t seen it.

In this five-minute scene, Yuri Orlov, an international arms dealer, is being interrogated by Jack Valentine, an Interpol agent who has spent years trying, unsuccessfully, to nail Yuri. Here, Jack has Yuri dead to rights on multiple counts of violating international arms embargoes. Jack’s worldview and his expectations of what is about to happen to Yuri are very relatable: sequential, logical, and linear. They are very believable, and they reflect the simple worldview that most of the audience probably shared when the movie began. So, they [the audience] *get* Jack. That is, until Yuri drops a truth bomb that reinterprets the situation in a completely different way and arrives at the exact opposite conclusion about the expected outcome.

So, what happened? Why didn’t Jack reach the same conclusion as Yuri before Yuri explained himself? Jack and Yuri are looking at the same basic evidence, data, etc. However, Yuri has a deeper, perhaps clearer, insight into the workings of the world (its basic facts and rules), as reflected by his nuanced understanding of how geopolitics actually works. Jack’s worldview lacks this subtlety. In Jack’s model, causes and effects have a simple, direct relationship and things are straightforward: you break a law, you go to prison. In Yuri’s model, things are less straightforward once you factor in short- vs. long-term objectives, the complex and interconnected relationships between counterparties, and the various incentives of each of the players involved in “the game.” For instance, Yuri implies that “the enemy of my enemy . . .” [is my friend]. He also implies that he [an arms dealer] serves a critical function [one that no publicly accountable gov’t wants to be seen performing]; i.e., however depraved Yuri may seem as a person, in the great geopolitical game of chess between nation-states, he is, unfortunately, “a necessary evil.” And, as it turns out, Yuri is spot on.

The mind-blowing beauty of this scene comes when Yuri opens his counterargument by stating explicitly [for the audience, using Jack as a proxy]: “I will be released for the same reasons you think I will be convicted . . .”

This sort of plot twist is extremely satisfying to watch and is a tool frequently used in film to underscore an unforgettable punchline to the story.

Within moments of Yuri’s explanation, the life drains out of Jack’s face as he comes to terms with his new reality. Jack knows in his gut that Yuri is right. Jack and Yuri are very likely opponents of equal intelligence (and capability), yet only one of them read the situation correctly, because only one of them understood the underlying (hidden) rules of the game.

Conclusion

In a world where predictive models have run amok and everyone is exposed to the same basic data and sources, the supporting ontology is often the only thing that really matters in creating or sustaining a competitive edge, because without the correct ontology you will get the wrong answer even if your data is complete and robust.

We work with enterprises every day to help them discover and apply ontologies to their data, so they can make better decisions and understand the world as it is, rather than as they hope it to be.

Prad Upadrashta

Senior Vice President & Chief Data Science Officer (AI)

Prad Upadrashta, as Senior Vice President and Chief Data Science Officer (AI), spearheaded thought leadership and innovation, and rebranded and elevated our AI offerings. Prad's role involved crafting a forward-looking, data-driven enterprise AI roadmap underpinned by advanced data intelligence solutions.
